ChatGPT Hallucinations: What They Are, Why They Happen, and How to Reduce Them

Artificial intelligence has made enormous progress in recent years. Tools like ChatGPT can write essays, generate code, summarize research, and even simulate conversation with remarkable fluency.

But there’s one major limitation you’ve probably encountered:

ChatGPT sometimes makes things up.

This phenomenon is known as AI hallucination.

In this guide, you’ll learn:

- What ChatGPT hallucinations actually are

- Why they happen

- Real examples

- When they’re most likely to occur

- How to reduce them using advanced prompt techniques

- Whether hallucinations will ever fully disappear

Let’s break it down clearly and practically.

What Are ChatGPT Hallucinations?

Quick Definition

A ChatGPT hallucination occurs when the AI generates false, misleading, or fabricated information while presenting it confidently as factual.

The model does not “know” it is hallucinating.

It is simply predicting text based on probability patterns.

Why Do Hallucinations Happen?

To understand hallucinations, you need to understand how large language models (LLMs) work.

ChatGPT:

- Does not “think”

- Does not access live internet data (unless specifically connected)

- Does not verify facts in real-time

- Predicts likely next tokens (words or word fragments), one at a time

This means it generates answers based on learned patterns — not fact-checking.

When its training data covers a topic thinly, or not at all, it fills in the gaps with plausible-sounding information.

That’s where hallucinations come from.

The Core Causes of AI Hallucinations

There are several main triggers.

1️⃣ Probability-Based Prediction

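ChatGPT picks each next token according to how probable it is given the preceding text, not according to whether it is true. A continuation can be statistically likely and still be wrong.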

If the model has partial information about a topic, it may “complete” it incorrectly.
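The gap between "probable" and "accurate" can be shown with a toy example. This is a minimal sketch, not how ChatGPT is implemented: the prompt, the candidate tokens, and every probability below are invented for illustration.

```python
import random

# Hypothetical next-token probabilities after the prompt
# "The capital of Australia is". All numbers are invented for illustration.
NEXT_TOKEN_PROBS = {
    "Sydney": 0.55,    # assumed to be the more common continuation, but wrong
    "Canberra": 0.40,  # correct
    "Melbourne": 0.05,
}

def most_likely_token(probs):
    """Return the token with the highest probability (greedy decoding)."""
    return max(probs, key=probs.get)

def sample_token(probs, rng):
    """Draw one token at random, weighted by its probability."""
    tokens = list(probs)
    weights = [probs[t] for t in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]

rng = random.Random(0)  # fixed seed so repeated runs give the same draws
greedy = most_likely_token(NEXT_TOKEN_PROBS)
samples = [sample_token(NEXT_TOKEN_PROBS, rng) for _ in range(5)]
print("greedy pick:", greedy)
print("five samples:", samples)
```

In this toy distribution the greedy pick is the wrong answer, because nothing in the selection step checks truth. It only compares probabilities.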

2️⃣ Lack of Real-Time Knowledge

If you ask:

“What did Company X announce today?”

Without live retrieval systems, ChatGPT may fabricate a plausible answer.

3️⃣ Ambiguous Prompts

Vague prompts increase hallucination risk.

Example:

“Explain the Smith Algorithm.”

If several algorithms share that name, or none is well established, the AI may invent details.

4️⃣ Overconfidence in Unknown Areas

If the model has limited training exposure to niche or obscure topics, it may generate synthetic details to maintain conversational flow.

5️⃣ High Creativity Settings

Higher temperature settings increase randomness.

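More randomness means less likely tokens are picked more often, which tends to raise the hallucination rate. A low temperature lowers that risk but does not guarantee accuracy.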
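The effect of temperature can be sketched with the standard softmax-with-temperature formula. The three scores below are hypothetical, and this sketch requires a temperature above zero (real APIs typically treat a temperature of 0 as a special case).

```python
import math

def softmax_with_temperature(logits, temperature):
    """Convert raw scores to probabilities; temperature must be > 0."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical raw scores for three candidate tokens.
logits = [2.0, 1.0, 0.1]

low = softmax_with_temperature(logits, 0.2)
high = softmax_with_temperature(logits, 1.5)
print("T=0.2:", [round(p, 3) for p in low])
print("T=1.5:", [round(p, 3) for p in high])
```

At the low temperature nearly all probability sits on the top-scoring token. At the high temperature the distribution flattens, so the weaker candidates are sampled far more often.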

👉 Related: What Is Temperature in ChatGPT?

Examples of ChatGPT Hallucinations

Here are common real-world examples:

- Inventing fake academic citations

- Creating nonexistent book titles

- Fabricating court cases

- Providing incorrect statistics

- Misquoting historical figures

- Inventing URLs that look realistic

The output sounds professional and polished — which makes it dangerous if not verified.

When Are Hallucinations Most Likely?

Hallucinations are more common when:

- Asking about obscure or very recent events

- Requesting exact statistics

- Requesting legal citations

- Asking for medical or scientific claims

- Using broad or vague prompts

- Asking for highly specific documentation

They are less common, or matter less, when:

- Asking for general explanations

- Requesting creative writing, where invention is expected rather than a defect

- Asking for structured frameworks

Are Hallucinations the Same as Lying?

No.

ChatGPT does not intentionally deceive.

It does not have intent.

Hallucinations are a byproduct of probability-based generation, not dishonesty.

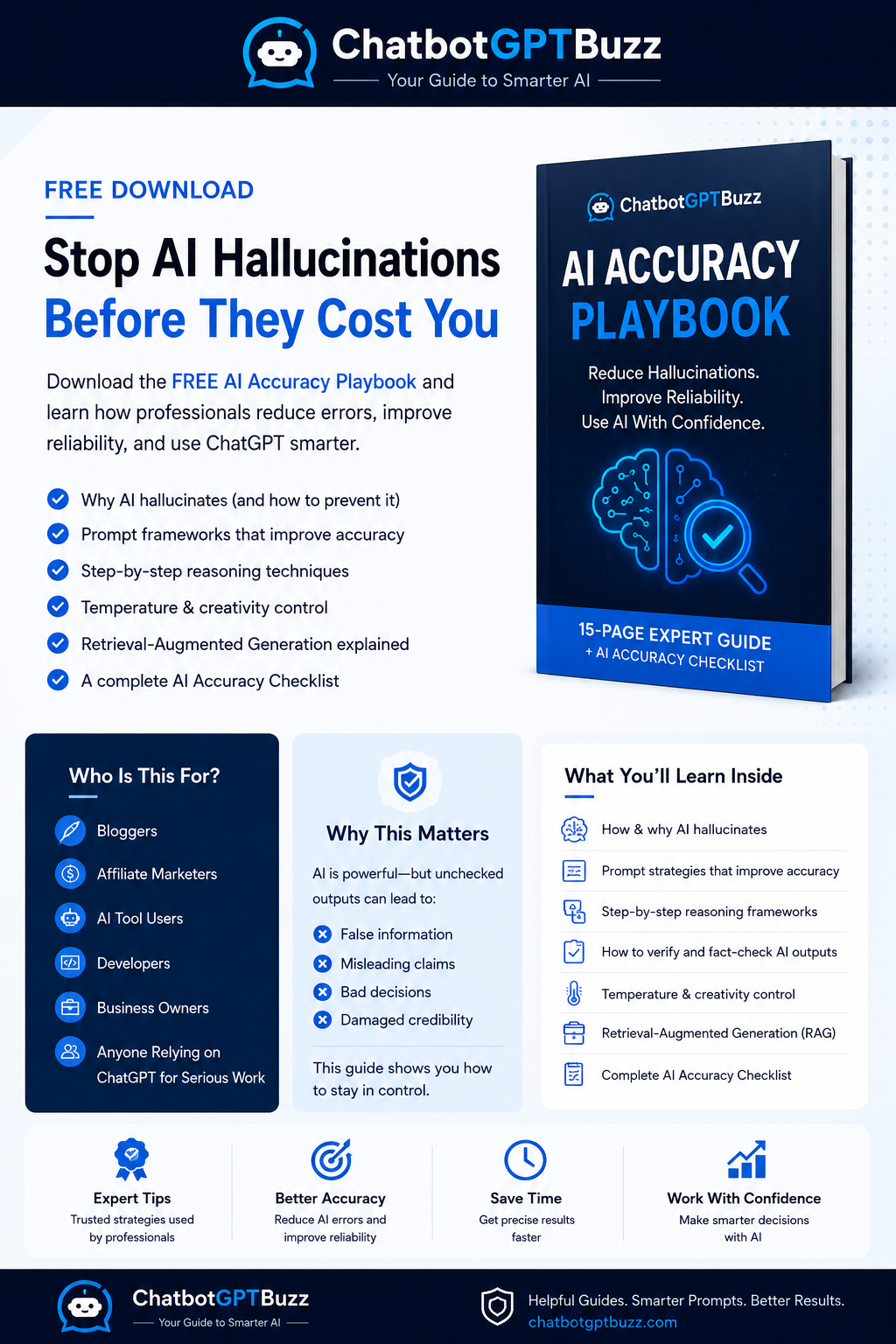

How to Reduce ChatGPT Hallucinations

While you cannot eliminate hallucinations completely, you can significantly reduce them.

Here’s how.

1️⃣ Use Explicit Accuracy Instructions

Add this line to your prompts:

“If you are unsure about any fact, say you are unsure rather than guessing.”

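This simple constraint gives the model an explicit alternative to guessing, which tends to reduce confidently stated fabrications. It is not a guarantee.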

2️⃣ Ask for Sources — Carefully

Instead of:

“Give me sources.”

Use:

“List widely recognized sources without inventing citations. If unsure, state uncertainty.”

Otherwise, the AI may generate fake references.

3️⃣ Lower Temperature Settings (API Users)

Lower temperature = lower randomness.

For factual tasks, a temperature between 0 and 0.3 is a reasonable starting point.
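As a rough sketch, here is how a low temperature might be set when assembling an API request. It assumes a chat-style request body with `model`, `messages`, and `temperature` fields. The helper name `build_factual_request` and the model name are placeholders, and nothing is actually sent.

```python
import json

UNSURE_INSTRUCTION = (
    "If you are unsure about any fact, say you are unsure rather than guessing."
)

def build_factual_request(question, model="your-model-name", temperature=0.2):
    """Assemble a request body for a factual question (nothing is sent)."""
    if not 0 <= temperature <= 0.3:
        raise ValueError("for factual tasks, keep temperature between 0 and 0.3")
    return {
        "model": model,  # placeholder, not a real model identifier
        "temperature": temperature,
        "messages": [
            {"role": "system", "content": UNSURE_INSTRUCTION},
            {"role": "user", "content": question},
        ],
    }

request_body = build_factual_request("When was the transistor invented?")
print(json.dumps(request_body, indent=2))
```

The range check is a design choice for this sketch: it makes a "factual" request fail early if someone passes a creative-writing temperature by mistake.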

4️⃣ Break Tasks Into Steps

Instead of:

“Write a complete legal analysis.”

Try:

- Summarize relevant law

- List known precedents

- Identify uncertainties

Step-by-step prompting reduces guesswork.
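The three steps above can be sketched as a small pipeline that sends one narrow prompt at a time. The `ask` parameter stands in for whatever function calls your model. `fake_ask` is a stub used only so the example runs offline, and the prompt wording is illustrative.

```python
from typing import Callable, Dict, List, Sequence

# Prompt templates mirroring the three steps; the wording is illustrative.
LEGAL_STEPS = (
    "Summarize the relevant law for: {topic}",
    "List known precedents for: {topic}. If unsure, say so.",
    "Identify uncertainties in the analysis of: {topic}",
)

def run_steps(
    topic: str,
    ask: Callable[[str], str],
    steps: Sequence[str] = LEGAL_STEPS,
) -> List[Dict[str, str]]:
    """Send each step as its own prompt and collect the answers in order."""
    results = []
    for template in steps:
        prompt = template.format(topic=topic)
        results.append({"prompt": prompt, "answer": ask(prompt)})
    return results

def fake_ask(prompt: str) -> str:
    """Stand-in for a real model call; it only echoes the prompt."""
    return f"[model answer to: {prompt}]"

for step in run_steps("non-compete clauses", fake_ask):
    print(step["prompt"])
```

Keeping each answer separate makes it easier to review one step at a time before anything is combined into a final document.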

👉 Related: Advanced Prompt Techniques

5️⃣ Use Retrieval-Augmented Generation (RAG)

RAG systems connect AI to external verified documents.

Instead of relying only on training data, the system:

- Retrieves relevant documents

- Injects them into the prompt

- Generates responses grounded in that content

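This can substantially reduce hallucination risk, provided the retrieved documents are relevant and accurate. The model can still misread them or add claims they do not support.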
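A toy version of the retrieve, inject, generate flow is sketched below. It makes several assumptions: the documents are invented sample data, retrieval is plain word overlap (production systems usually use embeddings and a vector index), and the final generation call is left out.

```python
import re

# Invented sample documents standing in for a verified knowledge base.
DOCUMENTS = [
    "Refunds are available within 30 days of purchase.",
    "Support is open Monday to Friday, 9:00 to 17:00.",
    "Shipping to Canada takes 5 to 7 business days.",
]

def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, documents, top_k=1):
    """Rank documents by how many words they share with the question."""
    q = tokenize(question)
    ranked = sorted(documents, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:top_k]

def build_grounded_prompt(question, documents, top_k=1):
    """Inject the retrieved text into a prompt that restricts the answer to it."""
    context = "\n".join(f"- {d}" for d in retrieve(question, documents, top_k))
    return (
        "Answer using only the context below. "
        "If the context does not contain the answer, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )

question = "Are refunds available after purchase?"
print(build_grounded_prompt(question, DOCUMENTS))
```

The resulting prompt would then be sent to the model. The instruction to admit when the context lacks the answer matters as much as the retrieval step itself.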

👉 Related: What Is RAG in AI?

6️⃣ Ask the AI to Explain Its Reasoning

Example:

“Explain your reasoning step-by-step before giving the final answer.”

This makes the logic easier to inspect and exposes weak points, although the stated reasoning is itself generated text and can contain errors.

7️⃣ Use Structured Output Requests

Structured prompts reduce ambiguity.

Example:

“Provide the answer in bullet points. Avoid speculation. State assumptions clearly.”
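One variation on this idea, swapped in here for illustration, is to request JSON instead of bullet points so the structure can be checked by code. The key names (`answer`, `assumptions`, `unsure_about`) and the instruction wording are assumptions of this sketch, not a standard.

```python
import json

REQUIRED_KEYS = {"answer", "assumptions", "unsure_about"}

# Hypothetical instruction to append to a prompt.
STRUCTURED_INSTRUCTION = (
    "Reply with a JSON object with the keys "
    '"answer", "assumptions", and "unsure_about". Avoid speculation.'
)

def parse_structured_reply(reply_text):
    """Parse a reply and fail loudly if it does not match the requested shape."""
    data = json.loads(reply_text)  # raises ValueError on invalid JSON
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = REQUIRED_KEYS - data.keys()
    if missing:
        raise ValueError(f"reply is missing keys: {sorted(missing)}")
    return data

# A hand-written reply standing in for real model output.
sample_reply = (
    '{"answer": "Water boils at 100 C at sea level.", '
    '"assumptions": ["standard atmospheric pressure"], '
    '"unsure_about": []}'
)
parsed = parse_structured_reply(sample_reply)
print(parsed["assumptions"])
```

A reply that omits its assumptions or is not valid JSON is rejected instead of being passed along silently.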

Can Hallucinations Be Eliminated Completely?

Short answer: No.

Long answer:

Hallucinations are fundamentally tied to probabilistic text generation.

Even as models improve:

- Probability prediction remains core

- Absolute certainty cannot be guaranteed

- Creative flexibility requires some randomness

However, hallucination rates tend to fall with:

- Larger training datasets

- Better alignment tuning

- RAG systems

- Improved prompting techniques

Are Hallucinations Dangerous?

They can be — depending on use case.

Low Risk:

- Creative writing

- Brainstorming

- Fiction

High Risk:

- Legal advice

- Medical information

- Financial recommendations

- Academic citations

Always verify high-stakes outputs independently.
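Part of that verification can be automated. The sketch below flags any URL in a model's output that is not on a list you have already checked by hand. The function name and the example URLs are hypothetical, and passing this check only shows that a link is known, not that the surrounding claim is correct.

```python
import re

# Matches http(s) URLs up to the next whitespace or closing bracket.
URL_PATTERN = re.compile(r"https?://[^\s)\]>]+")

def find_unverified_urls(model_output, trusted_urls):
    """Return URLs found in the output that are not in the trusted set."""
    found = [u.rstrip(".,;") for u in URL_PATTERN.findall(model_output)]
    return [u for u in found if u not in trusted_urls]

# Hypothetical model output and a hand-verified allowlist.
output = (
    "See https://example.com/report for the data "
    "and https://example.org/made-up-study."
)
trusted = {"https://example.com/report"}

print(find_unverified_urls(output, trusted))
```

Anything the function returns should be opened and checked by a person before the text is used.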

How Professionals Use ChatGPT Safely

Professionals reduce risk by:

- Treating AI as a drafting tool

- Fact-checking critical claims

- Using AI for structure, not authority

- Keeping human review in the loop

AI should assist — not replace — expert judgment.

The Tradeoff: Creativity vs Accuracy

There is a balance.

Higher creativity:

- More varied output

- More idea generation

- Higher hallucination risk

Lower creativity:

- More predictable output

- Lower hallucination risk

- Less stylistic variation

Understanding this tradeoff is key to responsible AI usage.

ChatGPT Hallucinations vs Other AI Models

All large language models can hallucinate.

This includes:

- Claude

- Gemini

- LLaMA-based models

- Open-source LLMs

Hallucinations are not unique to ChatGPT.

They are inherent to generative models.

Future Outlook: Will AI Become Fully Reliable?

AI reliability is improving rapidly.

Advances include:

- Hybrid retrieval systems

- Multi-model validation

- Fact-checking layers

- Reinforcement learning improvements

But probabilistic generation will likely always carry some uncertainty.

The solution isn’t blind trust.

It’s intelligent usage.

Final Thoughts

ChatGPT hallucinations are not a flaw in the traditional sense — they are a side effect of how generative AI works.

Understanding this changes how you use AI:

- Write better prompts

- Reduce ambiguity

- Verify critical facts

- Use structured techniques

- Combine AI with human judgment

When used correctly, ChatGPT remains an incredibly powerful productivity tool.

But accuracy always requires awareness.

Frequently Asked Questions

What causes ChatGPT hallucinations?

They occur because the model predicts statistically likely words rather than verifying facts in real time.

Can hallucinations be prevented completely?

No, but they can be significantly reduced through better prompting and retrieval systems.

Are hallucinations the same as lying?

No. The model has no intent — it generates text based on probability patterns.

Is ChatGPT reliable for professional work?

It can be, but high-stakes outputs should always be independently verified.
